At the CSIR, our research spans a diverse range of sectors, in which we act either as a partner or as a provider of technology and innovation solutions. Our highly competent staff and state-of-the-art infrastructure give partners access to leading minds and facilities, helping organisations deliver cutting-edge research and technological innovation that offers tangible solutions to immediate problems.

SOLUTIONS

What We Do

To solve complex problems, organisations need to take multidisciplinary approaches.

Agriculture and food

Built environment

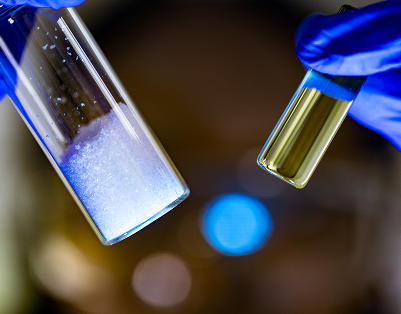

Chemicals and materials

Defence and security

Digital solutions and services

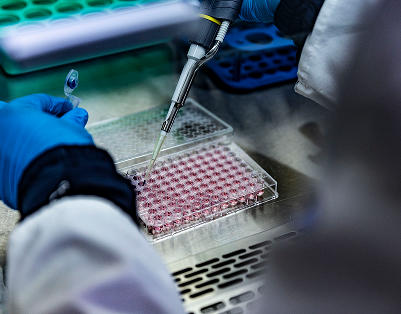

Health

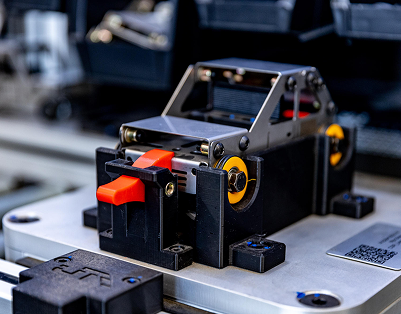

Manufacturing

Mining

Mobility and logistics

Natural environment
